Why history is repeating itself — and what to do before it does.

With AI development continuing at pace and AI tools growing ever more capable, spooked investors turned away from traditional SaaS stocks earlier this year following the release of Claude Cowork. They feared that AI tools like Claude would render traditional enterprise SaaS companies obsolete, triggering a broad selloff that hit Salesforce, Microsoft, Workday and others, as markets concluded that if AI could do the work, the software licences sitting underneath it were suddenly much harder to justify.

So, are we entering a new era where software companies are facing obsolescence, where each individual or team will be creating their own software for their own purposes? Have the advances in AI meant that we’ve forgotten the lessons of the past, only for us to have to learn how to manage them all over again? Have Claude and similar AI tools become the new MS Access of our era, and if so, what steps should you be taking now to make sure history doesn’t repeat itself?

There are a surprising number of similarities between where we are today with AI and the mistakes organisations made managing the MS Access databases of old. On the question of obsolescence, though, the short answer is no.

The large software companies that investors were fleeing are not standing still. They are embedding AI into their own products, accelerating development cycles and deepening the domain expertise that made them market leaders in the first place. A company that has spent fifteen years building a finance platform, with deep regulatory knowledge, established data models, and thousands of customer feedback loops, will still produce a better finance product than a generalist team armed with an AI coding tool. Specialist knowledge compounds.

The real risk AI introduces is not that these companies disappear, but that organisations bypass them to build their own versions, losing the standardisation, best practice, and regulatory alignment that mature software enforces by design. These are the same reasons business processes moved away from individually developed MS Access databases, and why most companies that could have built their own financial platforms pre-AI chose not to. The question is not whether AI replaces enterprise software. It is whether organisations will govern the tools being built alongside it.

Those of you experienced enough to remember IT in the 90s and early 2000s may recall a scenario like this. Somewhere in every organisation of meaningful size, a resourceful employee had discovered Microsoft Access. Within a few weeks they had built something genuinely useful: a client tracking tool, a stock reconciliation model, a way of automating a report that previously took half a day. Their manager was impressed. Their colleagues started using it. Nobody in IT knew it existed.

Fast forward eighteen months. That tool was processing a meaningful slice of the business. It had no backup. No documentation. No version control. No security review. No plan for what happened when its creator was promoted, moved departments, or left entirely. And when something eventually went wrong, because something always went wrong, the business discovered just how load-bearing this simple MS Access solution had quietly become. Early in my career we discovered one such solution because the office cleaner had switched off the desktop it was running on!

Enterprise IT departments spent the better part of a decade cleaning up the MS Access era. They built shadow IT policies, governance frameworks, procurement controls. The problem was addressed. And then, with the arrival of generative AI, the clock was quietly reset to zero.

“The barrier to creating software has collapsed. The barrier to deploying it responsibly has not moved an inch.”

What has actually changed

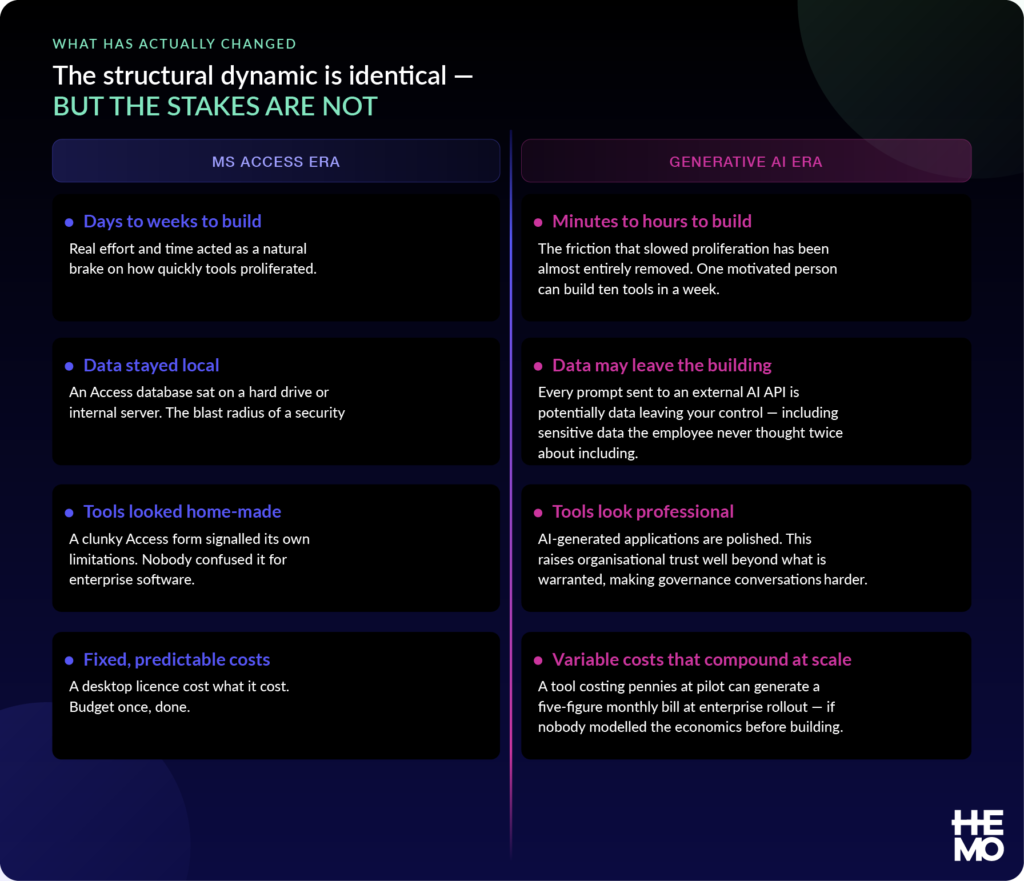

The structural dynamic is identical to the MS Access era: motivated people solving real problems outside formal processes, with tools that become critical before anyone in governance has heard of them. But several properties of the current AI era make the risk materially greater.

There are, however, differences in today's AI tools: the technology creating the issues can also be used to help solve and manage some of the risks. Code is inspectable. Documentation can be generated on demand. Version control culture exists. The organisations that move now, before their AI tool estate becomes unmanageable, will find that the tools to do so are built into the technology itself, and that the job is considerably easier than it will be for those who wait for a crisis.

The cost model nobody has budgeted for

Of all the ways AI tools differ from the software enterprises are used to managing, the cost model is the most underestimated. Traditional enterprise software is predictable: SaaS charges per user per month, infrastructure is licensed by capacity, and finance can budget for both. AI API pricing works on entirely different logic: you pay per token, per call, per run. The meter runs faster with more users, more frequent use, more data per request, and more capable models. There is no fixed ceiling. There is only consumption.

Why this matters at scale

A document summarisation tool processing one page per user per day costs approximately $18 per month with 20 users on a mid-tier model. The same tool at 2,000 users costs $1,800 per month. Add a premium model, longer documents, and frequency creep — users running it five times a day instead of once — and that figure reaches $45,000 per month. None of this is visible at the point of building. All of it is predictable with the right modelling.
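The scaling in that example can be reproduced with a simple consumption model. The sketch below is illustrative only: the function name, the token counts, the per-million-token prices and the 30-day month are all assumptions chosen so that the output lines up with the figures quoted above. They are not real vendor pricing.

```python
def monthly_cost_usd(users, calls_per_user_per_day, tokens_per_call,
                     usd_per_million_tokens, days_per_month=30):
    """Estimate monthly API spend for a pay-per-token tool (hypothetical model)."""
    total_tokens = users * calls_per_user_per_day * days_per_month * tokens_per_call
    return total_tokens * usd_per_million_tokens / 1_000_000


# Assumed inputs: 3,000 tokens per call at $10 per million tokens.
pilot = monthly_cost_usd(users=20, calls_per_user_per_day=1,
                         tokens_per_call=3_000, usd_per_million_tokens=10)
enterprise = monthly_cost_usd(users=2_000, calls_per_user_per_day=1,
                              tokens_per_call=3_000, usd_per_million_tokens=10)

# Same tool after drift: five runs a day, documents twice as long,
# and a premium model assumed to cost 2.5x as much per token.
drifted = monthly_cost_usd(users=2_000, calls_per_user_per_day=5,
                           tokens_per_call=6_000, usd_per_million_tokens=25)

print(f"pilot: ${pilot:,.0f}  enterprise: ${enterprise:,.0f}  drifted: ${drifted:,.0f}")
# prints: pilot: $18  enterprise: $1,800  drifted: $45,000
```

Every input is a multiplier, which is why the bill moves so far so quickly: nothing in the model has a ceiling unless policy puts one there.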

Three cost dynamics compound the problem in ways traditional software procurement has never encountered.

- Frequency creep: a tool built for weekly use becomes daily, then multiple times per day. In a per-seat SaaS model this does not affect the bill. In an AI API model it can multiply costs by a factor of five before anyone notices.

- Model selection drift: builders default to the most capable — and most expensive — model available. The difference between a lightweight model and a premium model for the same task can be twenty-five times the cost per token. Almost nobody defaults to the cheaper one without explicit policy.

- Prompt inefficiency compounds both: a tool that passes an entire document to the AI when it only needs three paragraphs is spending five to ten times what it needs to on every call. Entirely invisible until someone examines the API logs.
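Because each of these dynamics multiplies the bill rather than adding to it, they compound. The short sketch below simply multiplies the factors quoted in the list above. Treating them as independent, and all present at once, is an assumption, so the result is an upper-end illustration rather than a forecast.

```python
from math import prod

# Multipliers quoted in the list above. The prompt figure uses the low end
# of the "five to ten times" range; all three applying together is an assumption.
drift_factors = {
    "frequency_creep": 5,
    "model_selection": 25,
    "prompt_inefficiency": 5,
}

combined = prod(drift_factors.values())
print(f"combined multiplier if all three apply: {combined}x")
# prints: combined multiplier if all three apply: 625x
```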

| Pilot (20 users) | Team (200 users) | Enterprise (2,000 users) |
| --- | --- | --- |
| $180 / month | $1,800 / month | $18,000+ / month |

The governance implication is clear: every AI tool intended for more than a small team needs its economics modelled at enterprise scale before approval — not discovered on the first bill after rollout. Most organisations have not built this discipline yet.

The question that finds the ones that matter

Beyond the financial risk, the operational risk of ungoverned AI tools follows the same pattern as the Access era. A single question reliably surfaces the tools carrying the most risk: “Would your business be disrupted if this stopped working tomorrow?”

The answer surfaces what no technical audit easily finds: the tools that started as a personal convenience and became quietly critical. The reconciliation script someone runs at month-end. The summarisation tool the sales team uses before every major pitch. The classification engine routing customer queries to the right team.

The pattern to recognise

A tool described as “just for my team” this quarter will often be “we can’t run the month-end close without it” within twelve months, if it works well enough. The question is not whether your employees will build useful things with AI. They will. The question is whether your organisation will know about it before it becomes critical with an unmanageable price tag.

Why banning doesn’t work…and never has

The most common first response to AI governance risk is a blanket prohibition. No external AI tools. No Claude. No ChatGPT on company devices. Problem solved.

It is not solved. It is hidden.

Every organisation that has attempted a broad AI ban has discovered the same outcome: the ban reduces what IT can see, not what employees actually do. Personal devices, personal accounts, tools that do not look like AI tools from the outside. The behaviour continues; it just becomes invisible to governance, which makes it considerably more dangerous than if it were in the open.

The answer is not prohibition. It is governed innovation: making the right path less effortful than doing it unofficially. Compliance works when it is easier than non-compliance, not when it is enforced by fear of being caught.

The five things that have to work together

Getting this right requires five mechanisms operating in parallel. Most organisations have none of them. Some have one. The ones managing AI adoption well are beginning to build all five.

1. Classify before you build

Not all AI tools carry the same risk. A personal productivity tool one person uses to draft their own emails is categorically different from a tool processing customer data and feeding into a regulated reporting process. The classification question that matters is not technical but operational: who uses it, what data does it touch, and what happens if it stops working? Those three answers place any tool in the right risk tier and apply proportionate controls accordingly.
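The three questions map naturally onto a small classification rule. The sketch below is one possible shape for it, not a prescribed scheme: the tier names, the `ToolProfile` fields and the thresholds are all hypothetical and would need to reflect your own risk appetite.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    PERSONAL = 1   # one user, no sensitive data, no business impact
    TEAM = 2       # shared tool, limited impact
    CRITICAL = 3   # sensitive data, or the business is disrupted if it fails


@dataclass
class ToolProfile:
    name: str
    user_count: int                   # who uses it
    touches_sensitive_data: bool      # what data does it touch
    disrupts_business_if_down: bool   # what happens if it stops working


def classify(tool: ToolProfile) -> Tier:
    """Assign a risk tier from the three operational questions."""
    if tool.touches_sensitive_data or tool.disrupts_business_if_down:
        return Tier.CRITICAL
    if tool.user_count > 1:
        return Tier.TEAM
    return Tier.PERSONAL


email_drafter = ToolProfile("email drafter", 1, False, False)
reporting_feed = ToolProfile("regulated reporting feed", 40, True, True)
print(classify(email_drafter).name, classify(reporting_feed).name)
# prints: PERSONAL CRITICAL
```

Putting the data and disruption checks first means a tool used by a single person can still land in the highest tier, which matches the point that risk is operational rather than a function of headcount.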

2. Capture innovation before it becomes infrastructure

The submission of an AI tool idea should be as easy as posting in a Slack channel. Three capture mechanisms are needed: a low-friction ideas channel positioned as innovation rather than compliance; an automatic prompt within the sanctioned AI platform that triggers when someone moves from conversational use to creating something reusable; and a manager referral path for ideas that surface in team conversations but never become formal submissions.

3. Run the economics before you approve

Every non-trivial AI tool submission needs to be modelled at pilot, team, and enterprise scale before approval. The benefit-to-cost ratio at each scale should be part of the decision. The cost optimisation levers, using a lighter model, passing less data per call, caching repeated outputs, capping daily usage, should be applied before full rollout, not after the first budget conversation where someone asks why the API bill quadrupled.
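Two of those levers, caching repeated outputs and capping daily usage, can be sketched as a thin wrapper around whatever function actually calls the model. Everything here is hypothetical: `GovernedClient`, `DailyCapExceeded` and the `call_model` callable are illustrative names, and the stand-in model below makes no real API call.

```python
from collections import defaultdict
from datetime import date


class DailyCapExceeded(Exception):
    """Raised when a user has used up their paid calls for the day."""


class GovernedClient:
    def __init__(self, call_model, daily_cap_per_user):
        self._call_model = call_model     # hypothetical callable: prompt -> text
        self._cap = daily_cap_per_user
        self._cache = {}                  # prompt -> cached output
        self._usage = defaultdict(int)    # (user, day) -> paid calls made

    def run(self, user, prompt, today=None):
        if prompt in self._cache:
            return self._cache[prompt]    # cache hit: no API spend
        key = (user, today or date.today())
        if self._usage[key] >= self._cap:
            raise DailyCapExceeded(f"{user} reached {self._cap} paid calls today")
        self._usage[key] += 1
        result = self._call_model(prompt)
        self._cache[prompt] = result
        return result


# Stand-in for a real model call, so the sketch runs without any API.
paid_calls = []

def fake_model(prompt):
    paid_calls.append(prompt)
    return prompt.upper()

client = GovernedClient(fake_model, daily_cap_per_user=2)
client.run("ana", "summarise q1 report")
client.run("ana", "summarise q1 report")   # identical prompt, served from cache
print(len(paid_calls))
# prints: 1
```

A shared cache like this is only appropriate where outputs are safe to reuse across users; a tool handling sensitive data would need the cache scoped per user or switched off.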

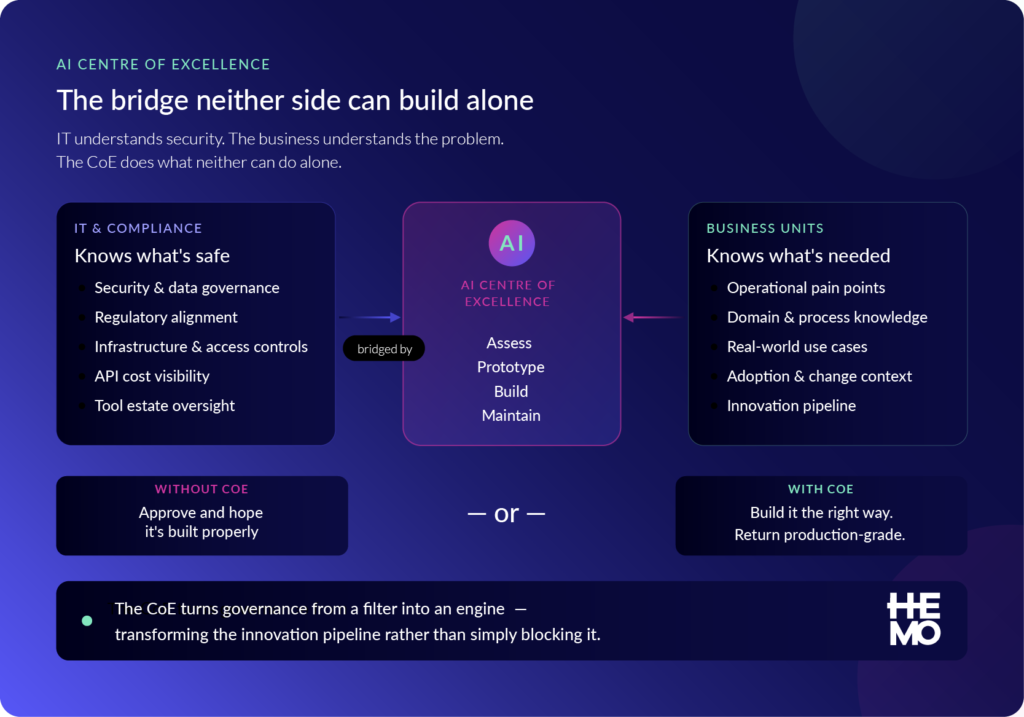

4. Route good ideas to an AI Centre of Excellence

Governance without capability is just bureaucracy. The organisations getting this right are not only reviewing ideas, they are acting on the good ones. An AI Centre of Excellence, staffed with the technical and domain expertise to assess, prototype, and properly build tools that pass the viability gate, transforms the innovation pipeline from a filter into an engine.

The CoE serves a function that neither IT nor the business unit can perform alone. IT can assess security and compliance. The business unit understands the operational problem. The CoE bridges both, translating a compelling idea into a properly governed, cost-optimised, maintainable solution the business can actually rely on. It also provides the institutional memory that keeps the tool estate coherent over time, preventing the duplication and fragmentation that characterised the Access era.

What a CoE changes

Without a CoE, the steering panel has two options for a good idea: approve it and hope it gets built properly, or decline it and lose the innovation. With a CoE, there is a third option: take it on, build it the right way, and return a production-grade tool to the business. That third option is what makes the governance model a competitive advantage rather than a constraint.

5. Educate the business, continuously, not once

Every other element of this framework depends on employees understanding enough to engage with it correctly. And here is the inconvenient truth: most employees using AI tools today have received no structured guidance on what is and is not appropriate. They are making judgements about data sensitivity, model selection, and tool sharing based on instinct, and instinct is not a governance policy.

Education in this context is not a one-day training course. It is an ongoing programme that evolves as the technology evolves, addressing all three levels: all employees need to understand what data can and cannot go into an AI prompt and what happens when they submit an idea; team leads need to spot ungoverned tools and sense-check economics before ideas reach the steering panel; and the steering panel and CoE need to evaluate submissions on risk, viability, and strategic fit while keeping pace with model capability changes.

An intake form that nobody submits to is paperwork. An ideas board that employees do not trust is a box-ticking exercise. Education is what makes every other element of this framework function — not a soft complement to the hard controls, but a precondition for them.

Five questions to ask your organisation this week

1. Do you have a live, accurate inventory of AI-built tools currently in use across your organisation? If the answer is uncertain, you almost certainly have ungoverned tools in production right now.

2. Does your data handling policy explicitly address what can and cannot be included in an AI prompt? If not, employees are making that judgement individually and some of them are getting it wrong.

3. Has the cost of any AI tool in your organisation been modelled at twice and ten times its current user base? If not, you have an unbudgeted liability hiding in your adoption numbers.

4. Is there a named business owner for every AI tool that touches business data or business processes? Named ownership is the single most important governance control and the one most frequently absent.

5. Does your organisation have a credible place for a good AI idea to go, somewhere it will be properly evaluated, built, and maintained? If not, your governance model is a filter with no funnel.

The window for proactive governance is now

The MS Access era took organisations a decade to clean up because the tools were already load-bearing before the problem was recognised. The AI era is moving faster. The estate grows more quickly. The cost surprises arrive sooner. And the window for proactive governance is shorter than it was twelve months ago: the window in which the number of tools is still inventoriable, the habits around submission and review are still forming, and the education programme can still shape behaviour rather than correct it.

The organisations that will manage this well are not the ones that react to a governance crisis. They are the ones building the framework now: establishing the capture pipeline, standing up the CoE, running the economics before approving the builds, and investing in the education that makes every employee a participant in governance rather than a risk to be managed around.

Governed innovation is not the cautious option. It is the option that actually enables speed at scale, sustainably, and without the decade of clean-up that followed the last time we let this run ahead of us.

AI is here to stay and its impact has been seismic. Many of the risks that come with adopting it are ones we have already learnt how to manage but have so far forgotten to apply. So, while far more advanced, Claude and other AI tools have the potential to re-create the same issues organisations had to deal with when business users were allowed to innovate on MS Access.