Conversion rate optimisation has a methodology problem.

Not a tools problem. Not a traffic problem. The frameworks most teams are working from were built for different markets, different audiences, and different buying behaviours. Apply them without adaptation in a market like the UAE or broader GCC, and you’re left with a lot of guesswork and extra steps.

The uncomfortable truth is that most CRO programmes are busy. They’re running tests, reviewing heatmaps, debating button colours. But busy and effective are different things. And in a region as demographically complex as this one, the gap between the two is wider than most teams realise.

Here are the misconceptions we see most often, and why they’re particularly costly here.

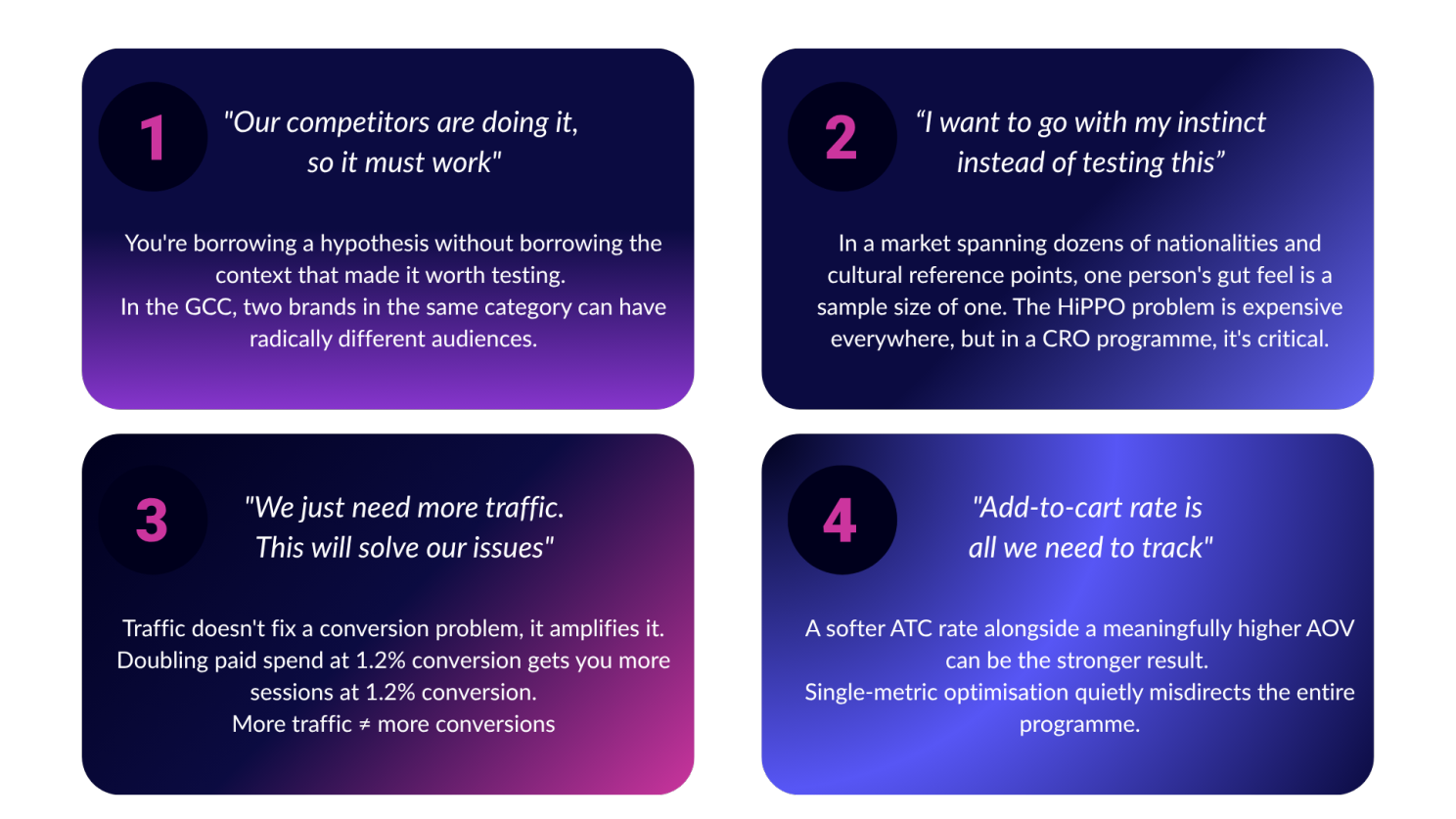

“Our competitors are doing it, so it must work”

Competitor observation is useful. Competitor copying is dangerous.

It’s tempting to look at what a category leader is doing (their layout, their promotional mechanics, their checkout flow) and assume there’s a proven signal there. Sometimes there is. But in the GCC, two brands operating in the same vertical can have radically different audience compositions: different nationalities, different income brackets, different language preferences, different relationships with digital commerce altogether.

What converts for them may not convert for your customers. You’re not looking at a proven playbook; you’re looking at someone else’s experiment, with no visibility into whether it’s actually working for them either. You’re borrowing a hypothesis without borrowing the context that made it worth testing.

This plays out most visibly in fashion and beauty, the GCC’s two largest and fastest-growing e-commerce categories. A defining feature of the GCC market is its demographic diversity, and brands looking to scale must resist treating the Gulf as a single, uniform market. Consumer behaviours differ widely by country and city, influenced by cultural norms, local heritage, and existing retail practices [Campaign Middle East].

Saudi consumers respond strongly to heritage-driven storytelling aligned with national transformation initiatives, while UAE shoppers often pursue a more globalised luxury experience, influenced by tourism and expatriate populations. Two brands in the same category, running the same CRO playbook, can get completely opposite results because their audiences are different people with different purchase drivers.

Electronics tells a similar story. Fashion, beauty, and online grocery delivery are leading e-commerce growth in the UAE [Bain & Company], but the trust signals, urgency triggers, and decision-making patterns that move one category don’t automatically transfer to another. What builds purchase confidence for a considered electronics buy is not the same as what converts in beauty or apparel.

Before you borrow anything from a competitor, ask more useful questions:

- Do we share the same customer?

- Can we test this feature before rolling it out?

- How will this feature change our customers’ experience?

“I want to go with my instinct instead of testing this”

Instinct is experience compressed. It has real value, but only when the experience it draws from is relevant to the problem at hand.

In a market where your customer base can span dozens of nationalities, languages, and cultural reference points, one person’s gut feel is a sample size of one. This is where the HiPPO problem (decisions driven by the Highest Paid Person’s Opinion) becomes genuinely expensive:

- Seniority doesn’t equal market representation.

- Confidence should not be a substitute for data.

We’ve seen this pattern play out more than once. A team hypothesises that trust and reassurance are the priority at a particular point in the purchase journey. It feels obvious: of course customers need to feel confident before they commit. But testing told a different story entirely. Customers at that stage had already resolved their concerns and were more responsive to inspiration than reassurance. The assumption wasn’t wrong in principle. It was wrong for that audience, at that moment.

That gap, between what feels right and what the data shows, is the most expensive gap in CRO. And in a market this diverse, it’s wider than most teams expect.

“We just need more traffic”

This one tends to come from e-commerce heads under pressure on revenue targets, and it’s understandable. More traffic feels like a lever you can pull quickly.

But traffic doesn’t fix a conversion problem. It amplifies it. If your site converts at 1.2% and you double your paid spend, you’re paying twice as much for traffic that still converts at the same 1.2%. The underlying issue doesn’t move.
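The arithmetic is easy to check. The Python sketch below is a minimal illustration with invented figures (100,000 visitors, an assumed cost of 1.50 per paid visit, conversion moving from 1.2% to 1.5%); the `funnel` helper and `COST_PER_VISIT` constant are hypothetical. It also generously assumes the extra paid traffic converts at the same 1.2% as existing traffic.

```python
# Hypothetical figures for illustration only; none of them come from real campaign data.
COST_PER_VISIT = 1.50  # assumed blended paid-media cost per visit


def funnel(visitors: int, conversion_rate: float) -> dict:
    """Return orders, paid-media spend and cost per order for one period."""
    orders = visitors * conversion_rate
    spend = visitors * COST_PER_VISIT
    return {"orders": orders, "spend": spend, "cost_per_order": spend / orders}


scenarios = {
    "baseline": funnel(visitors=100_000, conversion_rate=0.012),
    # Doubling spend doubles visits; we assume the new visits convert at the same 1.2%.
    "double traffic": funnel(visitors=200_000, conversion_rate=0.012),
    # Same spend, but the conversion experience is improved to 1.5%.
    "better conversion": funnel(visitors=100_000, conversion_rate=0.015),
}

for name, s in scenarios.items():
    print(f"{name:>17}: {s['orders']:>6,.0f} orders, "
          f"spend {s['spend']:>9,.0f}, cost per order {s['cost_per_order']:.2f}")
```

With these numbers, doubling traffic doubles both orders and spend but leaves cost per order at 125. Lifting conversion to 1.5% brings cost per order down to 100 without any extra spend.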

More traffic is the right answer when the conversion experience is already working well and you’re ready to scale what’s proven. It’s a very expensive answer when it isn’t. And yet the default response to a revenue shortfall is almost always spend, not diagnosis.

“Add-to-cart rate is all we need to track”

This one is quieter than the others but just as damaging.

Most CRO programmes default to add-to-cart (ATC) rate as their primary success metric. It’s visible, it moves relatively quickly, and it feels like a direct proxy for purchase intent. The problem is that it isn’t always one. A test can show a softer add-to-cart rate and still be the stronger result: if the customers who do add to cart spend meaningfully more, the net revenue impact can be positive. Optimise purely for ATC and you’d call that test a loss.

The inverse is also true. A test that lifts add-to-cart rate while quietly suppressing average order value can look like a win on the dashboard while actually diluting revenue per visitor. Teams measuring a single metric in isolation miss this entirely.

The metric you choose to optimise for shapes every decision downstream: which tests you run, which variants you call winners, which hypotheses you pursue next. In a market where basket values and purchase frequency vary significantly across demographics, revenue per visitor is almost always the more honest measure of whether something is actually working.
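To make the trade-off concrete, here is a minimal Python sketch that computes add-to-cart rate, conversion rate, average order value (AOV) and revenue per visitor (RPV) for two test arms. The `ArmResult` class and every number in it are hypothetical, and the sketch deliberately leaves out statistical significance testing, which a real readout would also need.

```python
from dataclasses import dataclass


@dataclass
class ArmResult:
    """Aggregated results for one test arm (hypothetical numbers)."""
    visitors: int
    add_to_cart_sessions: int
    orders: int
    revenue: float

    @property
    def atc_rate(self) -> float:
        return self.add_to_cart_sessions / self.visitors

    @property
    def conversion_rate(self) -> float:
        return self.orders / self.visitors

    @property
    def aov(self) -> float:
        return self.revenue / self.orders

    @property
    def rpv(self) -> float:
        # Revenue per visitor, equivalent to conversion rate x average order value.
        return self.revenue / self.visitors


control = ArmResult(visitors=50_000, add_to_cart_sessions=4_000, orders=600, revenue=180_000.0)
variant = ArmResult(visitors=50_000, add_to_cart_sessions=3_600, orders=580, revenue=203_000.0)

for metric in ("atc_rate", "conversion_rate", "aov", "rpv"):
    c, v = getattr(control, metric), getattr(variant, metric)
    print(f"{metric:>16}: control {c:.4f}  variant {v:.4f}  lift {(v - c) / c:+.1%}")
```

In this made-up example the variant’s add-to-cart rate falls while its RPV rises, because average order value climbs from 300 to 350. A dashboard tracking ATC alone would flag the variant as the loser.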

The layer most CRO programmes miss entirely

The GCC isn’t a single market with a single consumer profile. It’s a region of significant demographic complexity, and that complexity plays out directly in how people browse, evaluate, and decide to buy.

Seasonal behaviour alone should give most teams pause. Ramadan, Eid, Diwali, the Dubai Shopping Festival, and national days across multiple countries all represent genuine, recurring shifts in consumer intent, urgency, and purchasing behaviour. Audiences that behave one way in January can behave quite differently in March. A testing calendar built without accounting for this rhythm will produce results that are at best noisy, at worst actively misleading.
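One way to stop seasonal shifts from muddying a result is to tag each day of a test with the period it falls in, then report the lift per period as well as pooled. The Python sketch below does this with invented numbers. The `regular`/`seasonal` labels, the row layout and the `rpv_lift` helper are all hypothetical, and like any quick readout it ignores statistical significance.

```python
# Hypothetical aggregates: (period, arm, visitors, revenue). The period labels come from
# your own testing calendar; every figure here is invented for illustration.
daily_rows = [
    ("regular", "control", 10_000, 30_000.0),
    ("regular", "variant", 10_000, 33_000.0),
    ("seasonal", "control", 12_000, 54_000.0),
    ("seasonal", "variant", 12_000, 50_400.0),
]


def rpv_lift(rows, period=None):
    """Relative revenue-per-visitor lift of variant over control.

    If `period` is given, only rows tagged with that period are counted;
    otherwise all rows are pooled, as a calendar-blind readout would do.
    """
    visitors = {"control": 0, "variant": 0}
    revenue = {"control": 0.0, "variant": 0.0}
    for row_period, arm, row_visitors, row_revenue in rows:
        if period is None or row_period == period:
            visitors[arm] += row_visitors
            revenue[arm] += row_revenue
    control_rpv = revenue["control"] / visitors["control"]
    variant_rpv = revenue["variant"] / visitors["variant"]
    return variant_rpv / control_rpv - 1


for label in ("regular", "seasonal", None):
    print(f"{label or 'pooled':>8}: RPV lift {rpv_lift(daily_rows, label):+.1%}")
```

With these figures the variant wins by about 10% outside the seasonal window and loses during it. The pooled number sits close to zero and hides both effects.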

Then there’s the trust signal question, and this is where assumptions tend to be most costly. Trust matters enormously in e-commerce, and teams in this region are right to prioritise it.

But trust isn’t a single lever you pull once. Where you place trust signals, and at which point in the journey, changes everything. We’ve seen well-designed trust messaging significantly underperform, not because it was wrong, but because it was deployed at a stage where the customer had already moved past needing it. The same signals, repositioned closer to the moments of actual anxiety (payment, delivery commitment, returns), tell a completely different story.

Better questions before better tests

The brands performing well on conversion in this region aren’t necessarily running more tests than everyone else. They’re asking better questions before they run any.

What does our audience actually look like: not as assumed, but as evidenced in the data? Where are the real friction points, and do they differ by segment? What does trust mean to our specific customer, and are we delivering it at the moments that actually matter?

CRO that starts with those questions tends to produce tests that move numbers.

At HEMOdata, we help e-commerce teams experiment meaningfully, starting with a diagnostic process built for the real complexity of this market. If your conversion programme feels busy but isn’t moving the needle, it might be worth looking at the foundation first.